Posted: March 29, 2016 at 1:46 pm, Last Updated: January 27, 2017 at 3:27 pm

Social media platforms have become extremely popular during the past few years, presenting an alternative, and often preferred, avenue for information dissemination within massive global communities. The user-generated multimedia content they carry is emerging as a critical source of information for a variety of applications, particularly during times of crisis. To fully explore this potential, there is a need to better assess, and improve when possible, the accuracy of such information. This paper addresses the issue by focusing on user-contributed image tagging in Flickr. Using a natural disaster event (a wildfire) as a case study, we assess the reliability of user-generated tags. Furthermore, we compare these data to the results of a content-based annotation approach in order to assess the potential performance of an alternative, user-independent, automated approach to annotating such imagery. Our results show that Flickr user annotations can be considered quite reliable (at the level of ~50%), and that using a spatially distributed training dataset for our content-based image retrieval (CBIR) annotation process improves the performance of the content-based image labeling (to the level of ~75%).
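As a rough illustration of what a tag "reliability" figure of this kind can mean, the sketch below computes the share of images carrying a given tag whose content actually matches it. This is an assumption about the metric, not the paper's stated definition; the `Photo` record, the `shows_fire` ground-truth field, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Photo:
    photo_id: str
    user_tags: set[str]
    shows_fire: bool  # hypothetical manual ground-truth label


def tag_precision(photos, tag):
    """Fraction of photos carrying `tag` that a reviewer confirmed as fire imagery.

    Returns None when no photo carries the tag, since the ratio is undefined.
    """
    tagged = [p for p in photos if tag in p.user_tags]
    if not tagged:
        return None
    return sum(p.shows_fire for p in tagged) / len(tagged)


# Made-up sample records, for illustration only.
photos = [
    Photo("a", {"fire", "hills"}, True),
    Photo("b", {"fire"}, False),
    Photo("c", {"wildfire", "smoke"}, True),
    Photo("d", {"fire", "sunset"}, False),
]

fire_precision = tag_precision(photos, "fire")
```

The same function could score labels produced by an automated annotator, provided they are stored in the same tag field, which would make the two approaches directly comparable under this assumed metric.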

|

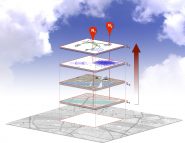

| Study methodology for comparing user-annotated to CBIR-annotated Flickr imagery |

|

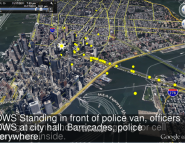

| Sample of training set images (yellow) and the retrieved fire images (green). |

Full reference:

Panteras, G., Lu, X., Croitoru, A., Crooks, A.T. and Stefanidis, A. (2016), Accuracy of User-Contributed Image Tagging in Flickr: A Natural Disaster Case Study. In Gruzd, A., Jacobson, J., Mai, P., Ruppert, E. and Murthy, D. (eds), Proceedings of the 7th International Conference on Social Media and Society, London, UK.